Looks like it might be fairly easy to differentiate between eyes closed and simulated “REM.” I simulated “REM” by rapidly moving my eyes with lids closed. Differentiating between simulated “REM” and eyes open, though, appears to be more difficult.

Here’s a recording of my left eye, just closed normally. I hope it’s typical of the eye when sleeping, but not in REM. The video is in gray scale at one quarter of the actual color recording resolution, which is the usual input into the frame differencing algorithm referenced earlier.

https://drive.google.com/open?id=1FIW2_DkRBQ15w0BLeJCoPezV3HEEAKmV

(Sorry if you have any trouble viewing the MP4s. Video codecs which produce the MP4s on the Raspberry Pi are tricky. The format provided was the best I could do. It is viewable on my Windows machine, but may not be on Mac or other platforms. Looks like Google Drive’s video processing algorithms are having some trouble dealing with the format, so you will have to download the video to play it.)

Here’s a recording of the frame differencing algorithm run on the video with eye closed.

https://drive.google.com/open?id=1wo0GR7wlSoJ8XGUINnJbVmUVG74vvHwn

A black pixel in the image means that no movement was detected for that pixel. A white pixel means that a great deal of movement was detected. A shade of gray means that some movement was detected: the lighter the shade, the greater the movement.
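For readers who want to see the idea in code, here is a minimal sketch of frame differencing. It assumes a plain per-pixel absolute difference between two consecutive gray scale frames held as NumPy arrays; the algorithm referenced earlier may differ in its details, and the function name `frame_difference` is made up for illustration.

```python
import numpy as np

def frame_difference(prev_gray, curr_gray):
    """Per-pixel absolute difference of two gray scale frames (hypothetical helper)."""
    # Widen to int16 first so the subtraction cannot wrap around in uint8.
    diff = curr_gray.astype(np.int16) - prev_gray.astype(np.int16)
    # 0 = no change (black), 255 = maximum change (white).
    return np.abs(diff).astype(np.uint8)

# Tiny synthetic example: two pixels change between frames.
prev_frame = np.zeros((4, 4), dtype=np.uint8)
curr_frame = prev_frame.copy()
curr_frame[1, 2] = 200  # large change, shows up as near white
curr_frame[3, 0] = 40   # small change, shows up as dark gray
diff = frame_difference(prev_frame, curr_frame)
```

Everything that did not change stays at 0, so a still eye should give a mostly black image.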

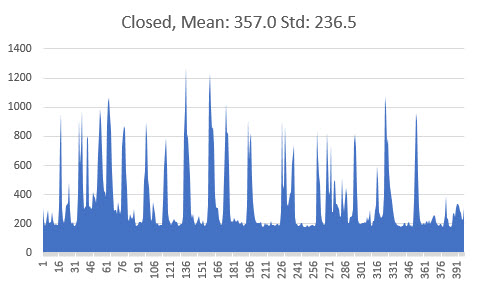

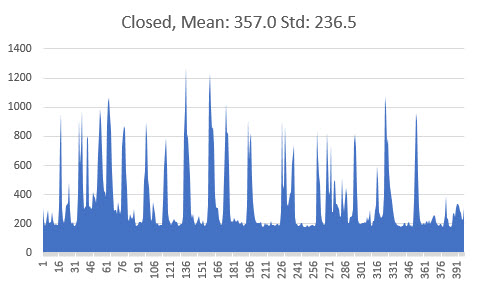

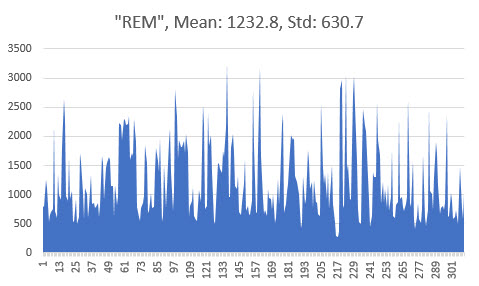

The frame differencing images are just two dimensional arrays, containing integers between 0 and 255 indicating a gray scale. 0 is black, and 255 is white. The magnitude, a.k.a. norm, of each image array was calculated and plotted in the graph below.

The X axis is the frame or picture number, and the Y axis a calculation of the magnitude or norm of all the array’s elements. With eyes closed, the mean of the magnitude was 357.0, and the standard deviation of the samples was 236.5.
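As a rough illustration of that calculation, the sketch below computes one magnitude per frame difference image and then the mean and standard deviation over a recording. It assumes the norm is the Frobenius norm (the square root of the sum of squared pixel values, which is NumPy's default for a 2D array) and that the standard deviation is the sample standard deviation; neither is confirmed here. The names `frame_magnitude` and `summarize` are hypothetical.

```python
import numpy as np

def frame_magnitude(diff_image):
    """Norm of one frame difference image (assumed to be the Frobenius norm)."""
    # Cast to float so squaring uint8 pixel values cannot overflow.
    return float(np.linalg.norm(diff_image.astype(np.float64)))

def summarize(magnitudes):
    """Mean and sample standard deviation of per-frame magnitudes."""
    values = np.asarray(magnitudes, dtype=np.float64)
    # ddof=1 gives the sample standard deviation (an assumption).
    return float(values.mean()), float(values.std(ddof=1))

# Tiny example: a difference image with two non-zero pixels.
example = np.zeros((2, 2), dtype=np.uint8)
example[0, 0] = 3
example[1, 1] = 4
magnitude = frame_magnitude(example)  # sqrt(3**2 + 4**2)
```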

Next, I simulated “REM” by moving my eyes rapidly with lids shut. Here’s a video of the simulation.

https://drive.google.com/open?id=1rM1YhRf6KRhc74l6zkQM-gD0KNF3NtV0

And here’s the associated frame differencing video.

https://drive.google.com/open?id=1dJ_vCNcvMQQMikQqbQYeajJlr_wV17zc

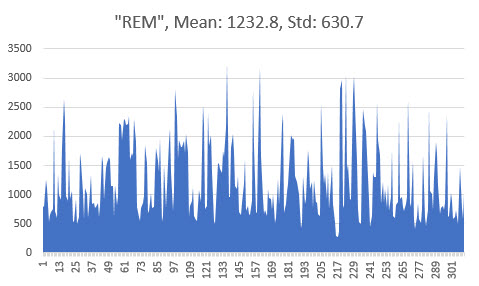

Again, the norm of the gray scale image array was plotted for each picture in the frame differencing video. The mean with eyes moving was much higher at 1232.8, as was the standard deviation at 630.7.

I ran the same experiment five times, with slight shifts in the camera location. (I cannot shorten the focal length of the camera any more without the lens falling out. The somewhat blurred image of the eye lid is the best that can be done presently, but it still seems to work fine.) The average norm of the simulated “REM” was always three or four times bigger than that with eyes still and closed. The standard deviation was always two or more times bigger as well.

Consequently, I think it might be possible to detect actual REM using a rolling average. For instance, if the rolling average of maybe 100 frame difference samples, over a period of roughly five seconds, is greater than a threshold of about 800, then REM may be likely.
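The rolling average idea could be sketched along these lines. This is only an illustration: the window of 100 samples and the threshold of 800 are the tentative numbers from the paragraph above, not tuned values, and the class name `RollingRemDetector` is invented for the example. One design choice to note is that it reports nothing until the window is full, so a few early spikes cannot trigger it.

```python
from collections import deque

class RollingRemDetector:
    """Flags likely REM when the rolling mean of frame magnitudes exceeds a threshold."""

    def __init__(self, window=100, threshold=800.0):
        self.window = window
        self.threshold = threshold
        self.samples = deque(maxlen=window)
        self.total = 0.0

    def update(self, magnitude):
        """Add one per-frame magnitude; return True if REM looks likely."""
        if len(self.samples) == self.window:
            # The oldest sample is about to be pushed out of the deque.
            self.total -= self.samples[0]
        self.samples.append(magnitude)
        self.total += magnitude
        if len(self.samples) < self.window:
            return False  # not enough history yet
        return self.total / self.window > self.threshold

# Small window so the behavior is easy to follow by hand.
detector = RollingRemDetector(window=3, threshold=800.0)
results = [detector.update(m) for m in [357, 357, 357, 1233, 1233]]
```

In the small example, the average only crosses 800 once two of the last three samples are high, which is the smoothing effect the rolling average is meant to provide.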

Unfortunately detecting eyes open, perhaps upon waking during the night, appears to be much more challenging. Here’s a video of an open eye, looking around a bit, with a good deal of blinking.

https://drive.google.com/open?id=1XVXqfyedTvdxOUpAdrCD84wuZ5KQkGtI

Here’s the associated frame differencing video.

https://drive.google.com/open?id=13Th7OSVAd3rbHGeP9lDbgV2CEHaxhJnc

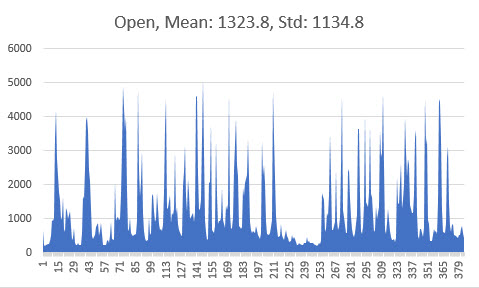

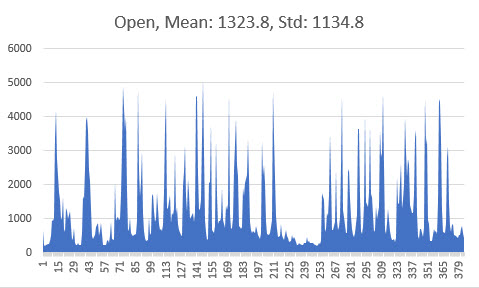

Like before, the norm of the image arrays is plotted in the graph below.

Unfortunately the mean magnitude of the arrays is usually pretty close to that of the simulated “REM.” (However, the standard deviation appears to be larger, and the peaks tend to be higher.) Such calculations, though, do depend upon what the subject does when their eyes are open. This might not be terribly predictable or uniform. Past REM detecting sleep masks usually skip this eyes open use case, perhaps for good reason!

Other methods might be deployed to determine if the eye is open, such as circular object detection of the pupil and iris, should the Raspberry Pi Zero have enough processing power.

https://docs.opencv.org/2.4/doc/tutorials/imgproc/imgtrans/hough_circle/hough_circle.html

Also, it was common for earlier lucid dreaming masks to ask the user to indicate that they are going to sleep. No dream signals are sent until it’s likely that they have fallen asleep, after a certain amount of time has passed.